By Olin Coles

Manufacturer: NVIDIA Corporation

Product Name: GeForce GTX 980 Graphics Card

Price: Starting at $534.99 (Amazon | B&H Photo)

Full Disclosure: The product sample used in this article has been provided by NVIDIA.

NVIDIA tends to dominate the field when it comes to graphics processing power, leaving AMD to reduce prices across their product line to remain competitive. Recently the AMD Radeon R9 290X became AMD’s flagship graphics card, and although powerful, it consumed plenty of electricity and was quite noisy during operation. NVIDIA answered back with GeForce GTX 780 Ti, which left no doubt who controls the top end of discrete graphics. Not satisfied with producing the world’s most powerful graphics card, NVIDIA set out to produce an even better product that would deliver improved efficiency and reduced operating noise for gamers. The end result was their Maxwell-based GeForce GTX 980 graphics card.

GeForce GTX 980 is no one-trick pony, as it targets much more than simply delivering high frame rates. Sure, Maxwell’s new GPU datapath architecture translates into a 40% delivered performance increase per CUDA core, but it also delivers a host of additional features not available from the competition. Four simultaneous Ultra HD 4K display heads are supported by GTX 980, utilizing DisplayPort 1.2 and HDMI 2.0 connections. While that may open the eyes to a bigger picture, GeForce GTX 980 makes that picture better using NVIDIA Voxel Global Illumination (VXGI). All of these new features pair nicely with G-SYNC technology, which eliminates screen tearing and display-generated stutter; FXAA and TXAA post-processing effects that smooth rough edges and soften their graphical appearance; and always-on NVIDIA ShadowPlay that captures real-time gaming action in 1080p.

Codenamed NVIDIA Maxwell, GM204 GPUs debut the company’s 10th-generation graphics processing architecture. Maxwell improves upon all previously released processor designs, and delivers greater performance while reducing energy consumption. Anticipated as the replacement for GTX 680, the new GeForce GTX 980 yields up to 200% performance increase (per watt) while requiring less power. Voxel Global Illumination and VR Direct technologies have been added to improve upon picture quality and virtual reality immersion. In this article, Benchmark Reviews tests the GeForce GTX 980 graphics card against today’s best gaming solutions.

- 200% performance per watt increase over GK104 on GeForce GTX 680

- Improved process scheduler

- New datapath organization

- 40% delivered performance increase per CUDA core

Multi-Frame Sampled AA (MFAA)

Anti-aliasing technique that alternates sample patterns both temporally and spatially to produce a perceived higher-quality AA while maintaining the performance penalty of lower-quality AA.

Dynamic Super Resolution (DSR)

Down-sampling technology with a 13-tap Gaussian filter effect that reduces the artifacts introduced by anti-aliasing during the process.

Voxel Global Illumination (VXGI)

Maxwell-accelerated real-time global illumination engine that generates efficient dynamic geometry and lighting, one-bounce indirect diffusion, reflections, and area lights. Available on UE4 and other modern engines near Q4-2014.

VR Direct

Low-latency virtual reality, with VR SLI, VR DSR, MSAA, auto-asynchronous warp, and auto-stereo.

4K NVIDIA ShadowPlay

Utilizing the H.264 video encoder built-in to every Maxwell GPU, ShadowPlay works in the background, seamlessly recording your gameplay footage. Compared to Kepler GPUs, Maxwell delivers a 250% increase in efficiency, allowing gamers to encode 4K footage at 60 FPS.

NVIDIA GeForce Experience

GeForce Experience is a new application from NVIDIA that optimizes your PC in two key ways. First, it maximizes your game performance and game compatibility by automatically downloading the latest GeForce Game Ready drivers. Second, GeForce Experience intelligently optimizes graphics settings for all your favorite games based on your hardware configuration.

Download NVIDIA GeForce Experience here: geforce.com/drivers/geforce-experience/download

GeForce GTX 980 is a top-end discrete graphics card for high-performance desktop computer gaming systems, available for $549.99 (MSRP) online. NVIDIA has built the GeForce GTX 980 specifically for hardware enthusiasts and hard-core gamers wanting to play PC video games at their maximum graphics quality settings using the highest screen resolution possible on multiple displays. It’s a small niche market that few can claim, but also one that every PC gamer dreams of enjoying.

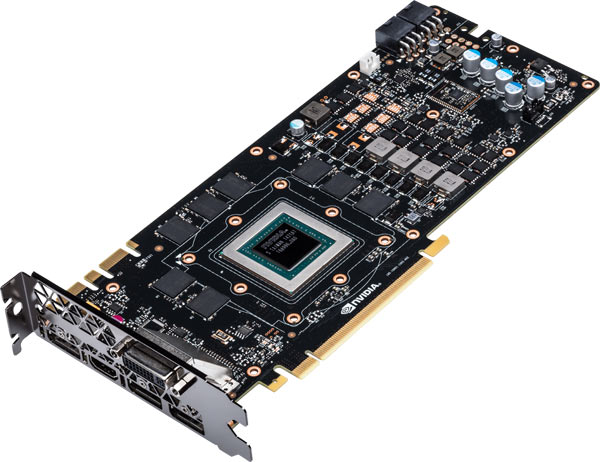

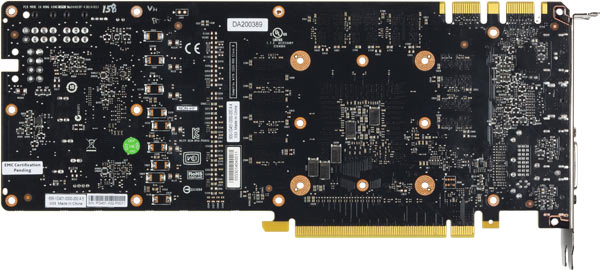

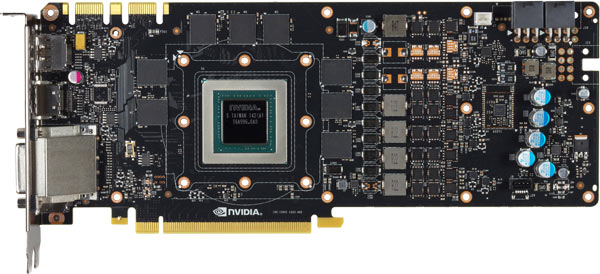

Like the GeForce GTX TITAN it’s modeled after, GeForce GTX 980 is a dual-slot video card that measures 10.5″ long and 4.4″ wide. Nearly identical to GTX TITAN and the GTX 780 series, GeForce GTX 980 also shares support for the following NVIDIA technologies: GPU Boost 2.0, 3D Vision, CUDA, DirectX 11, PhysX, TXAA, Adaptive VSync, FXAA, 3D Vision Surround, and SLI.

In addition to an improved NVIDIA GPU Boost 2.0 technology, GeForce GTX 980 also delivers refinements to the user experience. Multi-Frame Sampled AA (MFAA) joins FXAA and TXAA, while Adaptive VSync technology results in less chop, stutter, and tearing in on-screen motion. Adaptive VSync disables vertical sync whenever the frame rate falls below the monitor’s refresh rate and re-enables it once the frame rate recovers, thereby reducing stutter and tearing artifacts. NVIDIA MFAA is an anti-aliasing technique that produces perceived higher-quality effects while maintaining the performance penalty of lower-quality post processing. TXAA offers gamers a film-style anti-aliasing technique with a mix of hardware post-processing, a custom CG film-style AA resolve, and an optional temporal component for better image quality.

Fashioned from the NVIDIA GeForce GTX TITAN and GTX 780 series, engineers adapted a slightly tweaked design for GeForce GTX 980. The cards look virtually identical, save for the model name branded near the header. A single rearward 60mm (2.4″) blower motor fan is offset from the card’s surface to take advantage of a chamfered depression, helping GTX 980 draw cool air into the angled fan shroud. This design allows more air to reach the intake whenever two or more video cards are combined in close-proximity SLI configurations. Add-in card partners with engineering resources may incorporate their own cooling solution into GTX 980 after the initial launch cycle has passed; however, there seems little benefit in eschewing NVIDIA’s cool-running reference design.

GeForce GTX 980 offers a dual-link DVI (DL-DVI) connection, full-size HDMI 2.0 output compatible with 4K displays, and three total DisplayPort 1.2 connections. Add-in partners may elect to remove or possibly further extend any of these video interfaces, but most will likely retain the original reference board engineering. Only one of these video cards is necessary to drive triple-displays and NVIDIA 3D-Vision Surround functionality. All of these video interfaces consume exhaust-vent real estate, but this has very little impact on cooling since the 28nm Maxwell GPU generates much less heat than past GeForce processors, and also because NVIDIA intentionally distances the heatsink far enough from these vents to equalize exhaust pressure.

As with past-generation GeForce GTX series graphics cards, the GeForce GTX 980 is capable of two-card “Quad-SLI” configurations. Because GeForce GTX 980 is a PCI-Express 3.0 compliant device, the added bandwidth could potentially come into demand as future games and applications make use of these resources. Most games will be capable of utilizing the highest possible graphics quality settings using only a single GeForce GTX 980 video card, but multi-card SLI/Quad-SLI configurations are perfect for extreme gamers wanting to experience ultra-performance video games played at their highest quality settings with all the bells and whistles enabled across multiple monitors.

Specified at only 165W Thermal Design Power output, the Maxwell GM204 GPU inside GeForce GTX 980 represents NVIDIA’s most efficient and most powerful graphics processor. Since TDP demands are dramatically reduced, GTX 980 runs cooler during normal operation and has more power available for Boost 2.0 requests. NVIDIA has added a “GeForce GTX” logo along the exposed side of the video card, which glows green using LED backlit letters when the system is powered on. GeForce GTX 980 requires two 6-pin PCIe power connectors for operation, allowing NVIDIA to recommend a modest 600W power supply for computer systems equipped with one of these video cards.

By tradition, NVIDIA’s GeForce GTX series offers enthusiast-level performance with features like multi-card SLI pairing. More recently, the GTX family has included GPU Boost application-driven variable overclocking technology – now into GPU Boost 2.0. The GeForce GTX 980 graphics card keeps with tradition in terms of performance by producing single-GPU frame rates second to only GeForce GTX TITAN. Of course, NVIDIA’s Maxwell GPU architecture adds proprietary features to both versions such as: 3D Vision, Adaptive Vertical Sync, multi-display Surround, PhysX, MFAA and TXAA post-processing effects.

NVIDIA GM204 is comprised of 4 Graphics Processing Clusters (GPC), 16 Maxwell Streaming Multiprocessors (SMM), and four 64-bit memory controllers. In GeForce GTX 980, each GPC has its own raster engine along with four SMMs. Each of those four SMMs includes 128 CUDA cores, 8 texture units, and its own polymorph engine.

The NVIDIA GM204 inside GeForce GTX 980 consists of 5.2 Billion transistors, and offers 2048 CUDA cores operating at 1126 MHz which typically boosts to 1216 MHz. Assigned to each memory controller are 16 ROP units and 512KB of L2 cache, for a total 64 ROP units and 2MB of combined L2 cache on GTX 980.
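The unit counts quoted above follow from simple multiplication, which the short Python sketch below works through. It uses only the per-GPC, per-SMM, and per-controller figures stated in this article; the constant and function names are illustrative choices, not NVIDIA terminology.

```python
# Per-unit figures as stated in the article text.
GPCS = 4                      # Graphics Processing Clusters
SMM_PER_GPC = 4               # Maxwell Streaming Multiprocessors per GPC
CUDA_CORES_PER_SMM = 128
TEXTURE_UNITS_PER_SMM = 8
MEMORY_CONTROLLERS = 4
CONTROLLER_WIDTH_BITS = 64
ROPS_PER_CONTROLLER = 16
L2_KB_PER_CONTROLLER = 512


def gm204_totals():
    """Derive chip-wide totals from the per-unit counts above."""
    smm_total = GPCS * SMM_PER_GPC
    return {
        "smm": smm_total,
        "cuda_cores": smm_total * CUDA_CORES_PER_SMM,
        "texture_units": smm_total * TEXTURE_UNITS_PER_SMM,
        "rops": MEMORY_CONTROLLERS * ROPS_PER_CONTROLLER,
        "l2_cache_kb": MEMORY_CONTROLLERS * L2_KB_PER_CONTROLLER,
        "memory_bus_bits": MEMORY_CONTROLLERS * CONTROLLER_WIDTH_BITS,
    }


if __name__ == "__main__":
    for name, value in gm204_totals().items():
        print(f"{name}: {value}")
```

The derived totals (16 SMMs, 2048 CUDA cores, 128 texture units, 64 ROPs, 2048KB of L2 cache, and a 256-bit memory bus) agree with the figures quoted in the text.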

After a long absence from the GTX series design, the PCB heatspreader has returned on GeForce GTX 980. To this reviewer the accessory seems unwarranted, considering how the efficient GPU translates into less power consumed and therefore less heat generated. In spite of this, it does help reduce the operating temperatures while also protecting sensitive surface electronics.

In the next section, we detail our test methodology and give specifications for all of the benchmarks and equipment used in our testing process…

The Microsoft DirectX-11 graphics API is native to the Microsoft Windows 7 Operating System, and will be the primary O/S for our test platform. DX11 is also available as a Microsoft Update for the Windows Vista O/S, so our test results apply to both versions of the Operating System.

In each benchmark test there is one ‘cache run’ that is conducted, followed by five recorded test runs. Results are collected at each setting with the highest and lowest results discarded. The remaining three results are averaged, and displayed in the performance charts on the following pages.
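The averaging procedure described above can be sketched in a few lines of Python. This is a minimal illustration rather than the tooling actually used for the article: the function name and the sample frame rates are hypothetical, and the cache run is assumed to have been excluded before the five recorded results are passed in.

```python
from statistics import mean


def trimmed_average(recorded_runs):
    """Average five recorded runs after discarding the highest and lowest."""
    if len(recorded_runs) != 5:
        raise ValueError("expected exactly five recorded runs")
    ordered = sorted(recorded_runs)
    # Drop the lowest (first) and highest (last) results, keep the middle three.
    return mean(ordered[1:-1])


if __name__ == "__main__":
    # Hypothetical frame rates in FPS; the cache run is not part of this list.
    sample_runs = [61.8, 60.2, 62.5, 59.9, 61.1]
    print(f"{trimmed_average(sample_runs):.1f}")  # prints 61.0
```

Discarding the extremes keeps a single unusually fast or slow run from skewing the charted result.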

A combination of synthetic and video game benchmark tests has been used in this article to illustrate relative performance among graphics solutions. Our benchmark frame rate results are not intended to represent real-world graphics performance, as this experience would change based on supporting hardware and the perception of individuals playing the video game.

- Motherboard: ASUS P9X79 Deluxe (Intel X79 Express)

- Processor: Intel Core i7-3960X Extreme Edition (six cores/3300 MHz)

- System Memory: 32GB G.SKILL Ripjaws-Z DDR3-1600

- Power Supply Unit: OCZ Z-Series Gold 850W OCZZ850

- Monitor: Lenovo ThinkVision LT3053p IPS LED-Backlit 30″ LCD

- 3DMark11 Professional Edition by Futuremark

- Settings: Performance Level Preset, 1280×720, 1x AA, Trilinear Filtering, Tessellation level 5

- Aliens vs Predator Benchmark 1.0

- Settings: Very High Quality, 4x AA, 16x AF, SSAO, Tessellation, Advanced Shadows

- Batman: Arkham City

- Settings: 8x AA, 16x AF, MVSS+HBAO, High Tessellation, Extreme Detail, PhysX Disabled

- Battlefield 3

- Settings: Ultra Graphics Quality, FOV 90, 180-second Fraps Scene

- Battlefield 4

- Settings: Ultra Graphics Quality, FOV 70, 180-second Fraps Scene

- Lost Planet 2 Benchmark 1.0

- Settings: Benchmark B, 4x AA, Blur Off, High Shadow Detail, High Texture, High Render, High DirectX 11 Features

- Metro 2033 Benchmark

- Settings: Very-High Quality, 4x AA, 16x AF, Tessellation, PhysX Disabled

- Unigine Heaven Benchmark 3.0

- Settings: DirectX 11, High Quality, Extreme Tessellation, 16x AF, 4x AA

| Specification | GeForce GTX 980 |
|---|---|
| Graphics Processing Clusters | 4 |
| Streaming Multiprocessors | 16 |
| CUDA Cores (single precision) | 2048 |
| CUDA Cores (double precision) | ??? |
| Texture Units | 128 |
| ROP Units | 64 |
| Base Clock | 1126 MHz |
| Boost Clock | 1216 MHz |
| Memory Clock (Data rate) | 7000 MHz |
| L2 Cache Size | 512KB x4 |
| Total Video Memory | 4096MB GDDR5 |
| Memory Interface | 256-bit |
| Total Memory Bandwidth | 224.4 GB/s |
| Texture Filtering Rate (Bilinear) | 144.1 GigaTexels/sec |
| Fabrication Process | 28 nm |
| Transistor Count | 5.2 Billion |
| Connectors | 1x Dual-Link DVI, 1x HDMI 2.0, 3x DisplayPort 1.2 |
| Form Factor | Dual Slot |
| Power Connectors | Two 6-pin PCI-E |
| Recommended Power Supply | 600 Watts |
| Thermal Design Power (TDP) | 165 Watts |
| Thermal Threshold | 95° C |
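Two of the throughput figures in the specifications can be approximated from the other rows. The Python sketch below uses the standard relationships (data rate times bus width for memory bandwidth, texture units times base clock for bilinear fill rate); the function names are illustrative. With the nominal 7000 MHz data rate the bandwidth works out to 224.0 GB/s, slightly below the 224.4 GB/s listed, and the source does not explain the difference.

```python
def memory_bandwidth_gb_s(data_rate_mhz, bus_width_bits):
    """Peak memory bandwidth in GB/s: transfers per second times bytes per transfer."""
    bytes_per_transfer = bus_width_bits / 8
    return data_rate_mhz * bytes_per_transfer / 1000  # MB/s -> GB/s


def texture_fill_rate_gtexels(texture_units, core_clock_mhz):
    """Peak bilinear fill rate in GigaTexels/sec, assuming one texel per unit per clock."""
    return texture_units * core_clock_mhz / 1000  # MTexels/s -> GTexels/s


if __name__ == "__main__":
    # Inputs taken from the specification rows: 7000 MHz data rate, 256-bit bus,
    # 128 texture units, 1126 MHz base clock.
    print(f"Memory bandwidth: {memory_bandwidth_gb_s(7000, 256):.1f} GB/s")
    print(f"Texture fill rate: {texture_fill_rate_gtexels(128, 1126):.1f} GTexels/s")
```

The texture result (144.1 GigaTexels/sec) matches the listed value when the 1126 MHz base clock is used rather than the boost clock.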

| Graphics Card | GeForce GTX580 | Radeon HD7950 | GeForce GTX680 | Radeon HD7970 | GeForce GTX780 | Radeon R9 290 | GeForce GTX780Ti | Radeon R9 290X | GeForce GTX980 |
|---|---|---|---|---|---|---|---|---|---|
| GPU Cores | 512 | 1792 | 1536 | 2048 | 2304 | 2560 | 2880 | 2816 | 2048 |
| Core Clock (MHz) | 772 | 850 | 1006 | 925 | 863 | 947 | 876 | 1000 | 1126 |
| Shader Clock (MHz) | 1544 | N/A | Boost 1058 | N/A | Boost 902 | N/A | Boost 928 | N/A | Boost 1216 |
| Memory Clock (MHz) | 1002 | 1250 | 1502 | 1375 | 1502 | 1250 | 1750 | 1250 | 1750 |
| Memory Amount | 1536MB GDDR5 | 3072MB GDDR5 | 2048MB GDDR5 | 3072MB GDDR5 | 3072MB GDDR5 | 4096MB GDDR5 | 3072MB GDDR5 | 4096MB GDDR5 | 4096MB GDDR5 |
| Memory Interface | 384-bit | 384-bit | 256-bit | 384-bit | 384-bit | 512-bit | 384-bit | 512-bit | 256-bit |

- NVIDIA GeForce GTX 580 (772 MHz GPU/1544 MHz Shader/1002 MHz vRAM – Forceware 331.70)

- AMD Radeon HD 7950 (850 MHz GPU/1250 MHz vRAM – AMD Catalyst 14.3)

- NVIDIA GeForce GTX 680 (1006 MHz GPU/1058 MHz Boost/1502 MHz vRAM – Forceware 331.70)

- AMD Radeon HD 7970 (925 MHz GPU/1375 MHz vRAM – AMD Catalyst 14.3)

- NVIDIA GeForce GTX 780 (863 MHz GPU/902 MHz Boost/1502 MHz vRAM – Forceware 344.47)

- XFX Radeon R9 290 DD (947 MHz GPU/1250 MHz vRAM – AMD Catalyst 14.7 R3)

- NVIDIA GeForce GTX 780 Ti (875 MHz GPU/928 MHz Boost/1750 MHz vRAM – Forceware 344.47)

- MSI Radeon R9 290X (1000 MHz GPU/1250 MHz vRAM – AMD Catalyst 14.7 R3)

- NVIDIA GeForce GTX 980 (1126 MHz GPU/1216 MHz Boost/1750 MHz vRAM – Forceware 344.47)

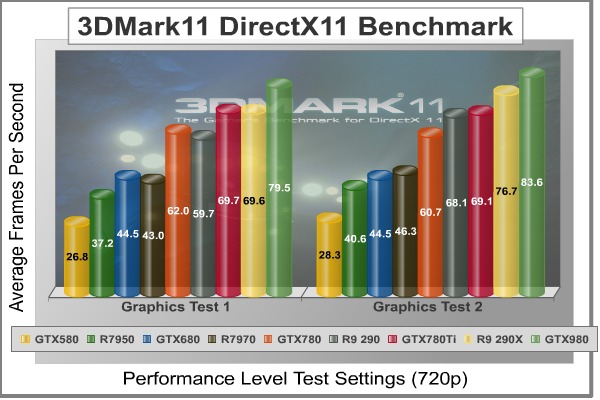

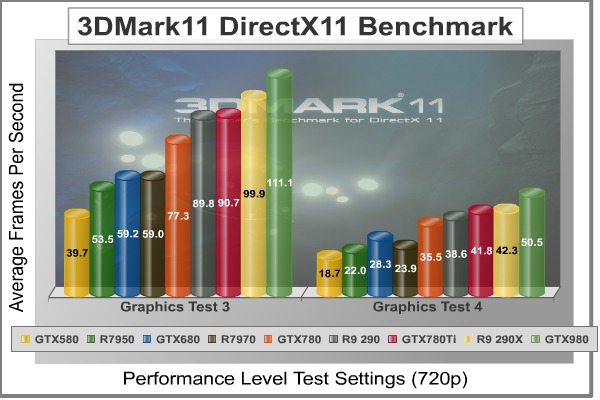

Futuremark 3DMark11 is the latest addition to the 3DMark benchmark series built by Futuremark Corporation. 3DMark11 is a PC benchmark suite designed to test DirectX-11 graphics card performance without vendor preference. Although 3DMark11 includes the unbiased Bullet Open Source Physics Library instead of NVIDIA PhysX for the CPU/Physics tests, Benchmark Reviews concentrates on the four graphics-only tests in 3DMark11 and uses them with medium-level ‘Performance’ presets.

The ‘Performance’ level setting applies 1x multi-sample anti-aliasing and trilinear texture filtering to a 1280x720p resolution. The tessellation detail, when called upon by a test, is preset to level 5, with a maximum tessellation factor of 10. The shadow map size is limited to 5 and the shadow cascade count is set to 4, while the surface shadow sample count is at the maximum value of 16. Ambient occlusion is enabled, and preset to a quality level of 5.

- Futuremark 3DMark11 Professional Edition

- Settings: Performance Level Preset, 1280×720, 1x AA, Trilinear Filtering, Tessellation level 5

3DMark11 Benchmark Test Results

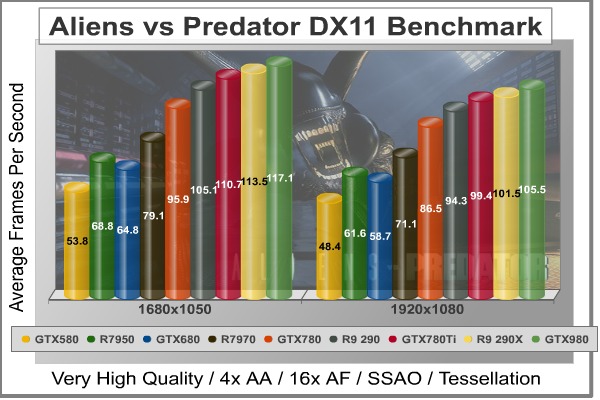

Aliens vs. Predator is a science fiction first-person shooter video game, developed by Rebellion, and published by Sega for Microsoft Windows, Sony PlayStation 3, and Microsoft Xbox 360. Aliens vs. Predator utilizes Rebellion’s proprietary Asura game engine, which had previously found its way into Call of Duty: World at War and Rogue Warrior. The self-contained benchmark tool is used for our DirectX-11 tests, which push the Asura game engine to its limit.

In our benchmark tests, Aliens vs. Predator was configured to use the highest quality settings with 4x AA and 16x AF. DirectX-11 features such as Screen Space Ambient Occlusion (SSAO) and tessellation have also been included, along with advanced shadows.

- Aliens vs Predator

- Settings: Very High Quality, 4x AA, 16x AF, SSAO, Tessellation, Advanced Shadows

Aliens vs Predator Benchmark Test Results

Batman: Arkham City is a third-person action game that adheres to the story line previously set forth in Batman: Arkham Asylum, which launched for game consoles and PC back in 2009. Based on an updated Unreal Engine 3 game engine, Batman: Arkham City enjoys DirectX 11 graphics which use multi-threaded rendering to produce life-like tessellation effects. While gaming console versions of Batman: Arkham City deliver high-definition graphics at either 720p or 1080i, you’ll only get the high-quality graphics and special effects on PC.

In an age when developers give game consoles priority over PC, it’s becoming difficult to find games that show off the stunning visual effects and lifelike quality possible from modern graphics cards. Fortunately Batman: Arkham City is a game that does amazingly well on both platforms, while at the same time making it possible to cripple the most advanced graphics card on the planet by offering extremely demanding NVIDIA 32x CSAA and full PhysX capability. Also available to PC users (with NVIDIA graphics) is FXAA, a shader based image filter that achieves similar results to MSAA yet requires less memory and processing power.

Batman: Arkham City offers varying levels of PhysX effects, each with its own set of hardware requirements. You can turn PhysX off, or enable ‘Normal’ levels which introduce GPU-accelerated PhysX elements such as Debris Particles, Volumetric Smoke, and Destructible Environments into the game, while the ‘High’ setting adds real-time cloth and paper simulation. Particles exist everywhere in real life, and this PhysX effect is seen in many aspects of the game to add back that same sense of realism. For PC gamers who are enthusiastic about graphics quality, don’t skimp on PhysX. DirectX 11 makes it possible to enjoy many of these effects, and PhysX helps bring them to life in the game.

- Batman: Arkham City

- Settings: 8x AA, 16x AF, MVSS+HBAO, High Tessellation, Extreme Detail, PhysX Disabled

Batman: Arkham City Benchmark Test Results

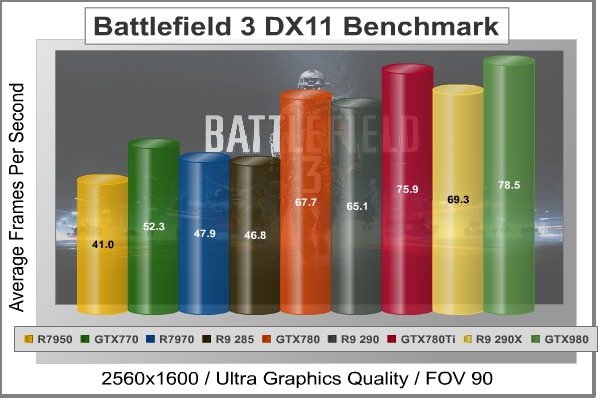

In Battlefield 3, players step into the role of the Elite U.S. Marines. As the first boots on the ground, players will experience heart-pounding missions across diverse locations including Paris, Tehran and New York. As a U.S. Marine in the field, periods of tension and anticipation are punctuated by moments of complete chaos. As bullets whiz by, walls crumble, and explosions force players to the ground, the battlefield feels more alive and interactive than ever before.

The graphics engine behind Battlefield 3 is called Frostbite 2, which delivers realistic global illumination lighting along with dynamic destructible environments. The game uses a hardware terrain tessellation method that allows a high number of detailed triangles to be rendered entirely on the GPU when near the terrain. This allows for a very low memory footprint and relies on the GPU alone to expand the low res data to highly realistic detail.

Using Fraps to record frame rates, our Battlefield 3 benchmark test uses a three-minute capture on the ‘Secure Parking Lot’ stage of Operation Swordbreaker. Relative to the online multiplayer action, these frame rate results are nearly identical to daytime maps with the same video settings.

- Battlefield 3

- Settings: Ultra Graphics Quality, FOV 90, 180-second Fraps Scene

Battlefield 3 Benchmark Test Results

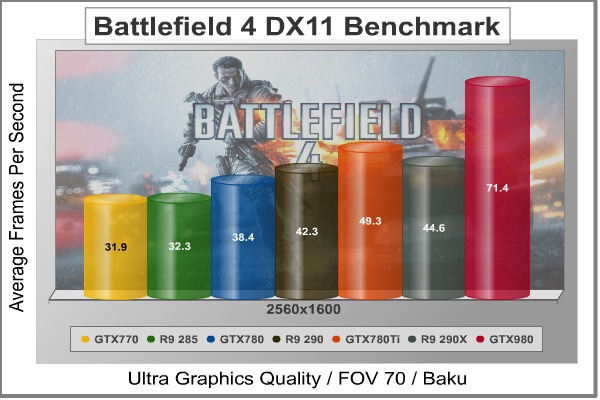

In Battlefield 4, players step into the role of a U.S. Marine named Recker who leads a special operations unit called ‘Tombstone’. While the single-player campaign offers a unique and exciting storyline, BF4 truly shines with a new 64-player online multiplayer mode with large spacious maps.

The graphics engine behind Battlefield 4 is called Frostbite 3, which offers more realistic environments with higher resolution textures and next-generation particle effects. A first-time ‘networked water’ fluid system allows players in the game to see the same wave at the same time. Tessellation has also been improved since Frostbite 2 in BF3.

AMD graphics cards are optimized for Battlefield 4 using AMD’s Mantle API that enables a boost in performance.

Using Fraps to record frame rates, our Battlefield 4 benchmark test uses a three-minute capture on the ‘Baku’ stage where Recker is handed the tactical binoculars. Relative to the online multiplayer action, these frame rate results are nearly identical to most large maps with the same video settings.

- Battlefield 4

- Settings: Ultra Graphics Quality, FOV 70, 180-second Fraps Scene

Battlefield 4 Benchmark Test Results

Lost Planet 2 is the second installment in the saga of the planet E.D.N. III, ten years after the story of Lost Planet: Extreme Condition. The snow has melted and the lush jungle life of the planet has emerged with angry and luscious flora and fauna. With the new environment comes the addition of DirectX-11 technology to the game.

Lost Planet 2 takes advantage of DX11 features including tessellation and displacement mapping on water, level bosses, and player characters. In addition, soft body compute shaders are used on ‘Boss’ characters, and wave simulation is performed using DirectCompute. These cutting edge features make for an excellent benchmark for top-of-the-line consumer GPUs.

The Lost Planet 2 benchmark offers two different tests, which serve different purposes. This article uses tests conducted on benchmark B, which is designed to be a deterministic and effective benchmark tool featuring DirectX 11 elements.

- Lost Planet 2 Benchmark 1.0

- Settings: Benchmark B, 4x AA, Blur Off, High Shadow Detail, High Texture, High Render, High DirectX 11 Features

Lost Planet 2 Benchmark Test Results

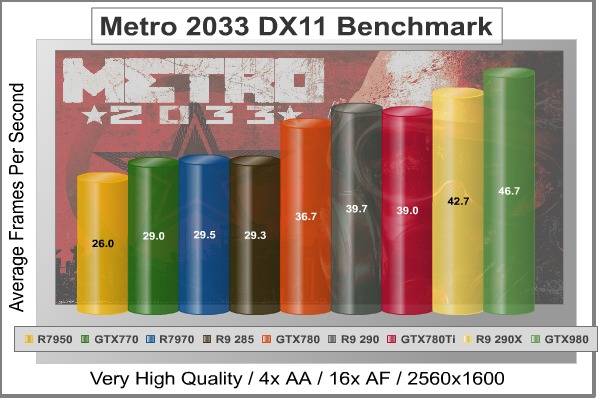

Metro 2033 is an action-oriented video game with a combination of survival horror and first-person shooter elements. The game is based on the novel Metro 2033 by Russian author Dmitry Glukhovsky. It was developed by 4A Games in Ukraine and released in March 2010 for Microsoft Windows. Metro 2033 uses the 4A game engine, developed by 4A Games. The 4A Engine supports DirectX-9, 10, and 11, along with NVIDIA PhysX and GeForce 3D Vision.

The 4A engine is multi-threaded such that only PhysX has a dedicated thread, and it uses a task model without any pre-conditioning or pre/post-synchronizing, allowing tasks to be done in parallel. The 4A game engine can utilize a deferred shading pipeline and uses tessellation for greater performance. It also has HDR (complete with blue shift), real-time reflections, color correction, film grain and noise, and support for multi-core rendering.

Metro 2033 featured superior volumetric fog, double PhysX precision, object blur, sub-surface scattering for skin shaders, parallax mapping on all surfaces and greater geometric detail with less aggressive LODs. Using PhysX, the engine uses many features such as destructible environments, cloth and water simulations, and particles that can be fully affected by environmental factors.

NVIDIA has been diligently working to promote Metro 2033, and for good reason: it’s one of the most demanding PC video games we’ve ever tested. When their flagship GeForce GTX 480 struggles to produce 27 FPS with DirectX-11 anti-aliasing turned down to its lowest setting, you know that only the strongest graphics processors will generate playable frame rates. All of our tests enable Advanced Depth of Field and Tessellation effects, but disable advanced PhysX options.

- Metro 2033 Benchmark

- Settings: Very-High Quality, 4x AA, 16x AF, Tessellation, PhysX Disabled

Metro 2033 Benchmark Test Results

The Unigine Heaven benchmark is a free publicly available tool that grants the power to unleash the graphics capabilities in DirectX-11 for Windows 7 or updated Vista Operating Systems. It reveals the enchanting magic of floating islands with a tiny village hidden in the cloudy skies. With the interactive mode, the emerging experience of exploring the intricate world is within reach. Through its advanced renderer, Unigine is one of the first to set precedence in showcasing the art assets with tessellation, bringing compelling visual finesse, utilizing the technology to the full extent and exhibiting the possibilities of enriching 3D gaming.

The distinguishing feature in the Unigine Heaven benchmark is hardware tessellation, a scalable technology aimed at the automatic subdivision of polygons into smaller and finer pieces, so that developers can gain a more detailed look for their games almost free of charge in terms of performance. Thanks to this procedure, the elaboration of the rendered image finally approaches the boundary of veridical visual perception: the virtual reality transcends conjured by your hand.

Since only DX11-compliant video cards will properly test on the Heaven benchmark, only those products that meet the requirements have been included.

- Unigine Heaven Benchmark 3.0

- Settings: DirectX 11, High Quality, Extreme Tessellation, 16x AF, 4x AA

Heaven Benchmark Test Results

- Battlefield 3 Campaign

- Settings: 2560×1600 Resolution, Ultra Graphics Quality, FOV 90, 180-second Fraps Scene

Battlefield 3 Benchmark Test Results

- Battlefield 4 Campaign

- Settings: Ultra Graphics Quality, FOV 70, 180-second Fraps Scene

- DX11: Metro 2033 Benchmark

- Settings: 2560×1600 Resolution, Very-High Quality, 4x AA, 16x AF, Tessellation, PhysX Disabled

Metro 2033 Benchmark Test Results

- Unigine Heaven Benchmark 3.0

- Settings: 2560×1600 Resolution, DirectX 11, High Quality, Extreme Tessellation, 16x AF, 4x AA

Heaven Benchmark Test Results

In this section, PCI-Express graphics cards are isolated for idle and loaded electrical power consumption. In our power consumption tests, Benchmark Reviews utilizes an 80-PLUS GOLD certified OCZ Z-Series Gold 850W PSU, model OCZZ850. This power supply unit has been tested to provide over 90% typical efficiency by Chroma System Solutions. To measure isolated video card power consumption, Benchmark Reviews uses the Kill-A-Watt EZ (model P4460) power meter made by P3 International. In this particular test, all power consumption results were verified with a second power meter for accuracy.

The power consumption statistics discussed in this section are absolute maximum values, and may not represent real-world power consumption created by video games or graphics applications.

A baseline measurement is taken without any video card installed on our test computer system, which is allowed to boot into Windows 7 and rest idle at the login screen before power consumption is recorded. Once the baseline reading has been taken, the graphics card is installed and the system is again booted into Windows and left idle at the login screen before the idle reading is taken. The loaded power consumption reading is taken with the video card running a stress test using graphics test #4 on 3DMark11 for real-world results, and again using FurMark for maximum consumption values.
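The baseline-subtraction method described above can be sketched in a few lines of Python. This is only an illustration: the wall-meter readings are hypothetical placeholders chosen for the example, not measurements from this review, and the function name `isolated_card_power` is our own.

```python
def isolated_card_power(system_watts: float, baseline_watts: float) -> float:
    """Return the power attributed to the video card alone.

    system_watts   -- wall reading with the card installed (idle or loaded)
    baseline_watts -- wall reading with no video card installed
    """
    if system_watts < baseline_watts:
        raise ValueError("system reading should not be below the baseline")
    return system_watts - baseline_watts


# Hypothetical wall-meter readings in watts (assumed for illustration only).
baseline = 75.0
idle_reading = 85.0
load_reading = 335.0

idle_card = isolated_card_power(idle_reading, baseline)
load_card = isolated_card_power(load_reading, baseline)
print(f"Idle: {idle_card:.0f} W, Load: {load_card:.0f} W")
```

Because the meter sits at the wall outlet, each reading also includes power supply conversion losses, so the difference slightly overstates what the card itself draws.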

This section discusses power consumption for the NVIDIA GeForce GTX 980 video card, which operates at reference clock speeds. These power consumption results are not representative of the entire GTX 980-series product family, which may feature a modified design or utilize factory overclocking. GeForce GTX 980 requires two 6-pin PCI-E power connections for normal operation, and will not activate the display unless proper power has been supplied. NVIDIA recommends a 600W power supply unit for stable operation with one GeForce GTX 980 video card installed.

Measured at the lowest reading, GeForce GTX 980 consumed a mere 10W at idle. NVIDIA specifies an average TDP of 165W; however, our real-world stress tests using 3DMark Vantage caused this video card to consume 260W, which is modest compared to 380W for AMD Radeon R9 290X.

These results position the GeForce GTX 980 as the least power-hungry yet most powerful top-end video card we’ve tested under load. If you’re familiar with electronics, it will come as no surprise that lower power consumption means less heat output, as evidenced by our thermal results below.

This section reports our temperature results from subjecting the video card to maximum load conditions. During each test a 20°C ambient room temperature is maintained from start to finish, as measured by digital temperature sensors located outside the computer system. GPU-Z is used to measure the temperature at idle as reported by the GPU, and also under load.

Using a modified version of FurMark’s “Torture Test” to generate maximum thermal load, peak GPU temperature is recorded in high-power 3D mode. FurMark does two things extremely well: it drives the thermal output of any graphics processor much higher than any video game realistically could, and it does so consistently every time. FurMark works well for testing the stability of a GPU as the temperature rises to its highest possible level.

The temperatures illustrated below are absolute maximum values, and do not represent real-world temperatures created by video games or graphics applications:

| Video Card | Ambient | Idle Temp | Loaded Temp | Max Noise |

| ATI Radeon HD 5850 | 20°C | 39°C | 73°C | 7/10 |

| NVIDIA GeForce GTX 460 | 20°C | 26°C | 65°C | 4/10 |

| AMD Radeon HD 6850 | 20°C | 42°C | 77°C | 7/10 |

| AMD Radeon HD 6870 | 20°C | 39°C | 74°C | 6/10 |

| ATI Radeon HD 5870 | 20°C | 33°C | 78°C | 7/10 |

| NVIDIA GeForce GTX 560 Ti | 20°C | 27°C | 78°C | 5/10 |

| NVIDIA GeForce GTX 570 | 20°C | 32°C | 82°C | 7/10 |

| ATI Radeon HD 6970 | 20°C | 35°C | 81°C | 6/10 |

| NVIDIA GeForce GTX 580 | 20°C | 32°C | 70°C | 6/10 |

| NVIDIA GeForce GTX 590 | 20°C | 33°C | 77°C | 6/10 |

| AMD Radeon HD 6990 | 20°C | 40°C | 84°C | 8/10 |

| NVIDIA GeForce GTX 650 Ti BOOST | 20°C | 26°C | 73°C | 4/10 |

| NVIDIA GeForce GTX 650 Ti | 20°C | 26°C | 62°C | 3/10 |

| NVIDIA GeForce GTX 670 | 20°C | 26°C | 71°C | 3/10 |

| NVIDIA GeForce GTX 680 | 20°C | 26°C | 75°C | 3/10 |

| NVIDIA GeForce GTX 690 | 20°C | 30°C | 81°C | 4/10 |

| NVIDIA GeForce GTX 780 | 20°C | 28°C | 80°C | 3/10 |

| Sapphire Radeon R9 270X Vapor-X | 20°C | 26°C | 68°C | 4/10 |

| XFX Radeon R9 285 DD | 20°C | 27°C | 62°C | 4/10 |

| XFX Radeon R9 290 DD | 20°C | 30°C | 90°C | 4/10 |

| MSI Radeon R9 290X | 20°C | 34°C | 95°C | 8/10 |

| NVIDIA GeForce GTX 780 Ti | 20°C | 31°C | 82°C | 3/10 |

| NVIDIA GeForce GTX 980 | 20°C | 30°C | 64°C | 4/10 |

As we’ve mentioned on the pages leading up to this section, NVIDIA’s Maxwell architecture yields a much more efficient GPU compared to previous designs. This becomes evident in the low idle temperature, and translates into barely lukewarm full-load temperatures.

While NVIDIA’s reference design works exceptionally well at cooling the GM204 GPU on GeForce GTX 980, consumers should expect add-in card partners to market custom-cooled versions at a premium. A reading of 64°C after ten minutes at 100% load using FurMark’s Torture Test is remarkably low, as illustrated by the chart above.
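One way to compare the loaded temperatures is as a rise over the 20°C ambient. The short sketch below does that arithmetic for four cards, using the loaded temperatures from the table; the helper name `rise_over_ambient` and the choice of cards are ours.

```python
AMBIENT_C = 20  # ambient room temperature maintained during testing

# Loaded temperatures in °C, taken from the temperature table.
loaded_temps = {
    "NVIDIA GeForce GTX 680": 75,
    "NVIDIA GeForce GTX 780 Ti": 82,
    "MSI Radeon R9 290X": 95,
    "NVIDIA GeForce GTX 980": 64,
}


def rise_over_ambient(loaded_c: int, ambient_c: int = AMBIENT_C) -> int:
    """Return how many degrees the GPU sits above room temperature."""
    return loaded_c - ambient_c


# List the cards from coolest to hottest under load.
for card, temp in sorted(loaded_temps.items(), key=lambda kv: kv[1]):
    print(f"{card}: +{rise_over_ambient(temp)}°C over ambient")
```

Since every card was tested at the same ambient temperature, the ranking matches the raw loaded temperatures; the subtraction only makes the size of the gap easier to read.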

IMPORTANT: Although the rating and final score mentioned in this conclusion are made to be as objective as possible, be advised that every author perceives these factors differently. While we each do our best to ensure that all aspects of the product are considered, there are often unforeseen market conditions and manufacturer revisions that occur after publication which could render our rating obsolete. Please do not base any purchase solely on this conclusion, as it represents our rating specifically for the product tested, which may differ from future versions. Benchmark Reviews begins our conclusion with a short summary for each of the areas that we rate.

My ratings begin with performance, where the $549 NVIDIA GeForce GTX 980 competes against the rest of the market in an unfairly matched comparison. With the launch of GTX 980, NVIDIA will discontinue GTX 780 Ti, 780, and 770. Gamers will most likely compare GeForce GTX 980 to the AMD Radeon R9 290X, because it is AMD’s single-GPU flagship and costs about $499. While there’s a $50 price difference between these two products, there’s a lot more than money separating them. In our DirectX 11 tests the NVIDIA GeForce GTX 980 outperformed every other single-GPU graphics card on the market. Judging by the results, GTX 980 accomplished its goal of 200% performance over GTX 680, while still surpassing the AMD Radeon R9 290X and GTX 780 Ti in our benchmark FPS tests without exception.

Synthetic benchmark tools offer an unbiased rating for graphics products, allowing video card manufacturers to display their performance without special game optimizations or significant driver influence. Futuremark’s 3DMark11 benchmark suite strained our high-end graphics cards with only mid-level settings displayed at 720p, yet GeForce GTX 980 still averaged 81.2 FPS between the four tests while Radeon R9 290X averaged 72.1 and GTX 780 Ti averaged 67.8 FPS. In contrast, the GeForce GTX 680 averaged only 44.1 FPS between the four tests. Unigine Heaven 3.0 benchmark tests used maximum settings that tend to crush most products, yet the NVIDIA GeForce GTX 980 produced a commendable 85.3 FPS at 1920×1080 compared to around 73 FPS for GTX 780 Ti and R9 290X. GeForce GTX 680 was crushed by GTX 980, producing only 46.2 FPS at this resolution.

Using a collection of various DirectX 11 games helped to show other performance disparities, especially when one vendor contributed to game development with optimizations for their product. In Aliens vs Predator, which is used to illustrate simple performance differences, GeForce GTX 980 produced 105.5 FPS compared to only 58.7 for GTX 680. Moderately demanding DX11 games such as Batman: Arkham City produced 122 FPS on GTX 980, while GTX 680 mustered 78 FPS. Battlefield 3, a game co-developed with NVIDIA support, generated 129.6 FPS on the GTX 980 with ultra quality settings, while the R9 290X trailed behind with 113.7 FPS, GTX 780 Ti with 107.0, and GeForce GTX 680 with only 75.4 FPS. Playing BF3 at 2560×1600 resolution, GTX 780 Ti nearly kept pace with GTX 980 while R9 290X lagged behind. Lost Planet 2 is another demanding game co-developed with NVIDIA support, enabling GeForce GTX 980 to maintain a 20 FPS lead over Radeon R9 290X at 1920×1080. The Frostbite 3 engine in Battlefield 4 received AMD developer support for this game sequel, which kept GTX 780 Ti and R9 290X neck-and-neck while the GeForce GTX 980 stepped well ahead of them both at every resolution. Metro 2033 is another demanding game co-developed with AMD support that requires tremendous graphics power to enjoy high quality visual settings, helping to shrink GTX 980’s lead over R9 290X, although GTX 980 still delivered nearly twice the performance of GTX 680.
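To put the generational comparison in one place, the sketch below divides each GTX 980 frame rate quoted in this conclusion by the matching GTX 680 figure. It only restates the numbers already given; the dictionary layout and the `speedup` helper are our own illustration, not part of the test methodology.

```python
# FPS figures quoted in this conclusion, as (GTX 980, GTX 680) pairs.
results = {
    "3DMark11 (average of four tests)": (81.2, 44.1),
    "Unigine Heaven 3.0 (1920x1080)": (85.3, 46.2),
    "Aliens vs Predator": (105.5, 58.7),
    "Batman: Arkham City": (122.0, 78.0),
    "Battlefield 3 (ultra quality)": (129.6, 75.4),
}


def speedup(new_fps: float, old_fps: float) -> float:
    """Return how many times faster the newer card is than the older one."""
    return new_fps / old_fps


for name, (gtx980, gtx680) in results.items():
    print(f"{name}: {speedup(gtx980, gtx680):.2f}x")
```

On these five results the ratio falls between roughly 1.5x and 1.9x, which is the frame rate basis for describing GTX 980 as nearly doubling GTX 680.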

Beyond the raw frame rate performance results between NVIDIA products, there’s an incredible difference between the architectures of their GeForce GTX products. If you just look at benchmark results, you’ll see the obvious increase in performance with each new generation. What you won’t see are the major differences in performance per watt, where the energy efficiency climbs from Fermi to Kepler and now Maxwell. With GM204, gamers not only get more graphical processing power, but they get it at half the energy demands, which is to say that Maxwell-based video cards operate with less heat output and are kept under better control by the card’s thermal management system.

Appearance is a much more subjective matter, especially since this particular rating doesn’t have any quantitative benchmark scores to fall back on. NVIDIA’s GeForce GTX series has used a recognizable design over the past two years with GeForce GTX TITAN and the GTX 780 series. Keeping with an ‘industrial’ look, GeForce GTX 980 uses identical matte silver trim for accents and differentiates itself with an imprinted model number. Since GeForce GTX 980 operates so efficiently and allows nearly all of the heated air to exhaust outside of the computer case, the reference cooling design does an excellent job managing thermal load for the GPU. While fashionably good looks help lure consumers, also keep in mind that this product seriously outperforms all the competition while consuming less power, generating far less heat, and producing very little noise during operation.

As the industry leader, NVIDIA continually shines in graphics card construction. Thanks in part to extremely quiet operation paired with more efficient Maxwell GM204 processor cores that consume less energy and emit less heat, it seems clear that GeForce GTX 980 carries on this tradition. Requiring only two 6-pin PCI-E power connections reduces the power supply requirement to 600W, which is practically mainstream for most enthusiast systems. Additionally, consumers have a top-end single-GPU solution capable of driving three monitors in 3D Vision Surround with the inclusion of Ultra 4K-compatible HDMI 2.0, DisplayPort 1.2, and digital DL-DVI video outputs.

As of launch day (18 September 2014), the NVIDIA GeForce GTX 980 graphics card is expected to sell with a starting price of $549.99 (MSRP), which is about $50 more than the roughly $499 AMD Radeon R9 290X but $150 less than when the (now discontinued) GTX 780 Ti debuted a year ago. Many versions of GeForce GTX 980 are available for sale online, priced at $534.99 (Amazon | B&H Photo). Keep in mind that hardware manufacturers and retailers are constantly adjusting prices, so expect these numbers to change a few times in the months after launch. For the money, you’re getting top-end frame rate performance, but there’s still plenty of value delivered beyond that, as the additional NVIDIA Maxwell features run off the charts. Only NVIDIA Maxwell graphics cards can offer Multi-Frame Sampled AA (MFAA), Voxel Global Illumination (VXGI), 4K NVIDIA ShadowPlay, and Dynamic Super Resolution (DSR), which join previously released features such as FXAA, TXAA, G-SYNC, GPU Boost 2.0, 3D Vision, and PhysX technology.

In conclusion, GeForce GTX 980 is the energy-efficient version of GTX TITAN with a decisive lead beyond what AMD’s Radeon R9 290X can produce. Even if it were possible for the competition to retool and overclock R9 290X to reach similar frame rate performance, which it’s not, temperatures and noise would still very heavily favor GeForce GTX 980. There is a modest price difference between them, but quite frankly, the competition doesn’t belong in the same class and will likely see major price reductions to remain relevant. In contrast, GTX 980 practically doubles what GTX 680 was capable of without the same demands on energy. GeForce GTX 980 delivers performance beyond expectations, while Maxwell GM204 offers a myriad of proprietary technologies that dramatically improve efficiency, enhance the user experience, and challenge game developers to build even more realism into their titles.

+ Crushes AMD Radeon R9 290X

+ Outstanding DX11 video games performance

+ Supports MFAA, FXAA, and TXAA

+ Supports Voxel Global Illumination (VXGI)

+ Triple-display and 3D Vision Surround support

+ Cooling fan operates at very quiet acoustic levels

+ Features HDMI 2.0, DisplayPort 1.2, Ultra 4K support

+ Very low power consumption at idle and heat output under load

+ Upgradable into dual- and triple-SLI card sets

– Very expensive enthusiast product

- Performance: 10.0

- Appearance: 9.00

- Construction: 9.50

- Functionality: 10.0

- Value: 7.00

Excellence Achievement: Benchmark Reviews Golden Tachometer Award.

COMMENT QUESTION: Who do you support: NVIDIA or AMD?

3 thoughts on “NVIDIA GeForce GTX 980 Video Card Review”

Thanks for this review. I am glad I waited a bit longer with building my new system. This card seems beyond belief. Every time I think it can’t get any better and then this monster card appears.

I hardly think it “crushed” the R9 290X, however it did thrash the GTX 680, so it makes sense to upgrade if you have an older Nvidia card.

It isn’t that much faster than the 780TI. The big attraction is that the power consumption is much lower (more OC headroom) and the lower price.

I think it may be worth waiting to see what AMD has to offer this generation and for “Big Maxwell” to arrive.
